Climate change is one of the external drivers in Willamette Envision. Daily weather data, such as temperature and precipitation, become input variables for biophysical and human systems modeling components as they simulate land and water system changes over the 21st century. Although every projection made by global climate models indicates a warmer climate, the range of plausible projections is wide, and how they will play out in the Willamette River Basin climate is unknown. To account for the large uncertainty in projected climate, the WW2100 project selected three representative scenarios (High Climate Change, Reference, and Low Climate Change) spanning the range of projections in temperature while including variability in precipitation changes. The WW2100 team then used the daily weather conditions predicted by these three scenarios as model forcings for WW2100 simulations. This page describes the process that we, as the WW2100 climate team, used to select and process data from global climate models so that it could be used as input variables for Willamette Envision. It also summarizes key characteristics of future climate conditions predicted by these models.

Our approach involved the following steps:

- Assessment of the best available climate models for the Pacific Northwest.

- Selection of greenhouse gas emissions scenarios.

- Selection of three representative climate scenarios using a sensitivity approach.

- Tailoring resulting data to the Willamette River Basin by employing an approach called downscaling.

Our analysis indicates that by 2100, the Willamette River Basin will be between 1° C (2° F) and 7° C (13° F) warmer than today. WW2100’s three representative climate scenarios closely span the spread of this uncertainty and range from 6° C (10.5° F) warming for the High Climate Change scenario to 1° C (2° F) warming for the Low Climate Change scenario. The Reference Case scenario represents the middle of this range with 4° C (7.5° F) warming over the century.

Select Findings from Climate Analysis

By 2100, the Willamette River Basin is projected to be between 1° Celsius (2° Fahrenheit) and 7° C (13° F) warmer than in the recent past (1950-2005). Here we summarize climate projections for the Willamette River Basin determined from analysis and downscaling of global climate models and provide context for the three WW2100 climate scenarios.

Temperature

- By 2100, the Willamette River Basin is projected to be between 1° C (2° F) and 7° C (13° F) warmer than today. This conclusion is based on two greenhouse gas (GHG) concentration pathways, also called emissions scenarios, with output from 20 global climate models (GCMs) from the Coupled Model Intercomparison Project Phase 5.

- WW2100’s three representative climate scenarios closely span the spread of this uncertainty and range from 6° C (10.5° F) warming for the High Climate Change (HighClim) scenario to 4° C (7.5° F) warming for the Reference Case scenario to 1° C (2° F) warming for the Low Climate Change (LowClim) scenario.

- Warming from increasing anthropogenic GHG concentrations dominates the long-term variability in temperature. Projected temperature increases on the decadal scale exceed natural variability such that the Willamette River Basin does not experience the climate of the latter 20th century during any decade from the present through 2100 (and beyond).

- The summer months of July through September, already the warmest months of the year, are projected to warm most under climate change, by about 2° C (3.6° F) more than in winter.

Figure 3. The four RCPs and their emissions trajectories over the 21st century. The largest uncertainty in climate modeling is how much greenhouse gas (GHG) humans will continue to emit into the atmosphere and thus how much trapped energy from the sun will continue to heat the atmosphere. To account for this uncertainty, researchers supporting the United Nations’ Intergovernmental Panel on Climate Change (IPCC) created four emissions scenarios, called the Representative Concentration Pathways (RCPs), to help streamline climate modeling. (RCPs replace the older Special Report on Emissions Scenarios [SRES].) The RCPs are: RCP 8.5, RCP 6, RCP 4.5, and RCP 2.6. The four RCPs represent different GHG concentrations. Higher numbers represent a greater degree of additional radiative forcing above preindustrial levels by 2100 (RCP 8.5 is bigger than RCP 6 and so on). For WW2100, we employed two RCPs: RCP 8.5 (the high emissions scenario, which assumes human industry will continue to emit greenhouse gases at a growing rate) and RCP 4.5 (a middle-of-the-road scenario in which emissions will be curbed starting in the middle of the 21st century).

Figure 4. Temperature projections for the Willamette Basin with WW2100 scenarios. Differences in annual temperature for 1950-2100 from a historical baseline (mean of 1950-2005). Results are from 40 downscaled climate simulations, employing 20 CMIP5 GCMs and two GHG concentration pathways (RCP 4.5 and RCP 8.5). The simulated historical temperatures (with known GHG concentrations) are shown in gray. Future projections with assumed GHG concentrations are color-coded: yellow for the lower GHG concentration pathway (RCP 4.5) and red for the high GHG concentration pathway (RCP 8.5); orange denotes where the two RCPs overlap. Overlaying this are WW2100’s representative climate scenarios: HighClim, Reference, and LowClim. The representative scenarios are combinations of three climate models (HadGEM2-ES, MIROC5, and GFDL-ESM2M) run with RCP 4.5 and RCP 8.5: The HighClim scenario was run with RCP 8.5; the Reference scenario was run with RCP 8.5; and the LowClim scenario was run with RCP 4.5. (See Fig. 3 for explanation of RCP emissions scenarios.) Note: the three representative scenarios closely track the range of uncertainty resulting from the multi-model ensemble runs.
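The "difference from a historical baseline" plotted in this kind of figure can be sketched in a few lines. The example below is only an illustration: the function name and the temperature values are hypothetical and are not WW2100 data. It assumes the input is a mapping from year to annual mean temperature in degrees Celsius, and it subtracts the mean of the 1950-2005 baseline years from every year.

```python
from statistics import mean


def annual_anomalies(annual_temps, base_start=1950, base_end=2005):
    """Return {year: temperature minus baseline mean}.

    annual_temps maps year -> annual mean temperature (deg C).
    The baseline is the mean over base_start..base_end inclusive.
    """
    baseline_years = [y for y in annual_temps if base_start <= y <= base_end]
    if not baseline_years:
        raise ValueError("no data inside the baseline period")
    baseline = mean(annual_temps[y] for y in baseline_years)
    return {y: t - baseline for y, t in sorted(annual_temps.items())}


# Hypothetical annual means (deg C), for illustration only.
series = {1950: 10.0, 1980: 10.4, 2005: 10.8, 2050: 12.4, 2099: 14.4}
anomalies = annual_anomalies(series)
```

Because the result is a temperature difference rather than a temperature, converting it to Fahrenheit uses only the factor 1.8, without the 32-degree offset.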

Figure 5. Change in mean temperature. Changes in mean temperature by month for the period 2050-2099 from the historical period 1950-1999 for the Willamette River Basin. The October-to-October timeframe shows the water year, which runs from 1 October to 30 September of any given year. Note: WW2100’s representative climate scenarios largely span the range of the uncertainty of the projected temperature changes at the high, medium, and low ends of the temperature distribution. HighClim is represented in blue; Reference Case is represented in purple; LowClim is represented in pink.

Precipitation

- The majority of climate scenarios show a general trend of wetter winters and drier summers in the Willamette River Basin. However, unlike with temperature projections that uniformly show temperatures will rise, climate models do not unanimously simulate either a drier or wetter future.

- Increases in winter precipitation stem mainly from heavier precipitation during wet periods, not an increase in the frequency of precipitation.

- Natural variability will remain large relative to the greenhouse gas response, even at the decadal scale, so that yearly and decadal precipitation both above and below the historical averages should still be expected.

- Due to rising temperatures, precipitation is increasingly likely to fall as rain instead of as snow, resulting in a decreased snowpack. The snowpack (measured as snow water equivalent, SWE) as a proportion of cumulative water-year precipitation (P) is expected to decline markedly across the region. A parallel study by members of the WW2100 team shows that those sub-basins that historically receive the most snow, such as North Santiam, have projected winter (December, January, and February) declines of one-quarter to two-thirds in SWE/P by about the mid-21st century. Sub-basins with little snow currently, such as Middle Willamette, are projected to receive virtually no snow in the future. The small projected increases in total winter precipitation provide little offset to the loss in snow due to projected warming.

- For every 1° C (~2° F) increase in annual mean temperature, there is a roughly 15 percent decrease in summer flow in the lower Willamette River Basin. However, as temperatures get significantly higher than the historical average, the spring snowpack is essentially absent. Thus, additional temperature increases have only a marginal effect on streamflow.

Figure 6. Projected differences (as percentages) in annual precipitation for 1950-2100 from a historical baseline (mean of 1950-2005). Here the zero line represents historical climate; changes above and below the line represent more or less precipitation respectively. Note: The majority of climate models run for WW2100 project changes in annual precipitation that do not deviate greatly from our region’s historical climate. This means natural variability is expected to play a larger role in future precipitation trends than forcing from GHGs. However, the majority of models are trending toward wetter winters and drier summers (See Fig. 7). The simulated historical precipitation (with known GHG concentrations) is shown in gray. Future projections with assumed GHG concentrations are color-coded: pale blue for the lower emissions scenario (RCP 4.5) and dark blue for the high emissions scenario (RCP 8.5); medium blue denotes where the two RCPs overlap. Overlaying this are WW2100’s representative climate scenarios: HighClim, Reference, and LowClim. The representative scenarios are combinations of three climate models (HadGEM2-ES, MIROC5, and GFDL-ESM2M) run with RCP 4.5 and RCP 8.5: The HighClim scenario was run with RCP 8.5; the Reference scenario was run with RCP 8.5; and the LowClim scenario was run with RCP 4.5. Note that the three representative scenarios include both drier and wetter than average periods.

Related Publications & Links

- Rupp, D. E., Abatzoglou, J. T., Hegewisch, K. C., & Mote, P. W. (2013). Evaluation of CMIP5 20th century climate simulations for the Pacific Northwest USA. Journal of Geophysical Research: Atmospheres, 118(19).

- Vano, J. A., Kim, J. B., Rupp, D. E., & Mote, P. W. (2015). Selecting climate change scenarios using impact‐relevant sensitivities. Geophysical Research Letters, 42(13), 5516-5525.

- MACA Statistically Downscaled Climate Data from CMIP5

- Pacific Northwest Climate Impacts Research Consortium (CIRC)

- Oregon Climate Change Research Institute

Contributors to WW2100 Climate Research

- Philip Mote, Oregon State University (OSU) Oregon Climate Change Research Institute (OCCRI) and the Pacific Northwest Climate Impacts Research Consortium (CIRC) (lead)

- David Rupp, OSU OCCRI/CIRC

- Anne Nolin, OSU College of Earth, Ocean, and Atmospheric Sciences

- Kathie Dello, OSU OCCRI/CIRC

- Julie Vano, OSU OCCRI/CIRC

- Dennis Lettenmaier, formerly University of Washington CIRC

- John Abatzoglou, University of Idaho (UI) CIRC

- Katherine Hegewisch, UI CIRC

- Hamid Moradkhani, Portland State University Civil & Environmental Engineering

Web page authors: P. Mote, D. Rupp, J. Vano, N. Gilles

Last updated: November 2015

Climate Methods in Brief

Climate Model Evaluation

The World Climate Research Programme’s Coupled Model Intercomparison Project (CMIP) is a worldwide effort to establish a set of standard experimental protocols for the use of general circulation computer models (also called global climate models), or GCMs, in the development of climate scenarios. Essentially, the CMIP project is an attempt by the world’s climate modelers to improve the performance of GCMs, standardize methods of model evaluation, and make GCM outputs directly comparable.

Results from the CMIP project’s latest phase (Phase 5 or CMIP5) began to be available around the time of the launch of WW2100. CMIP5 ushered in a new wave of GCMs and resulting climate data, and WW2100’s climate modeling team saw it as an opportunity both to use the new CMIP5 GCMs in WW2100’s work and to assess the performance of the CMIP5 models for the United States Pacific Northwest as a whole.

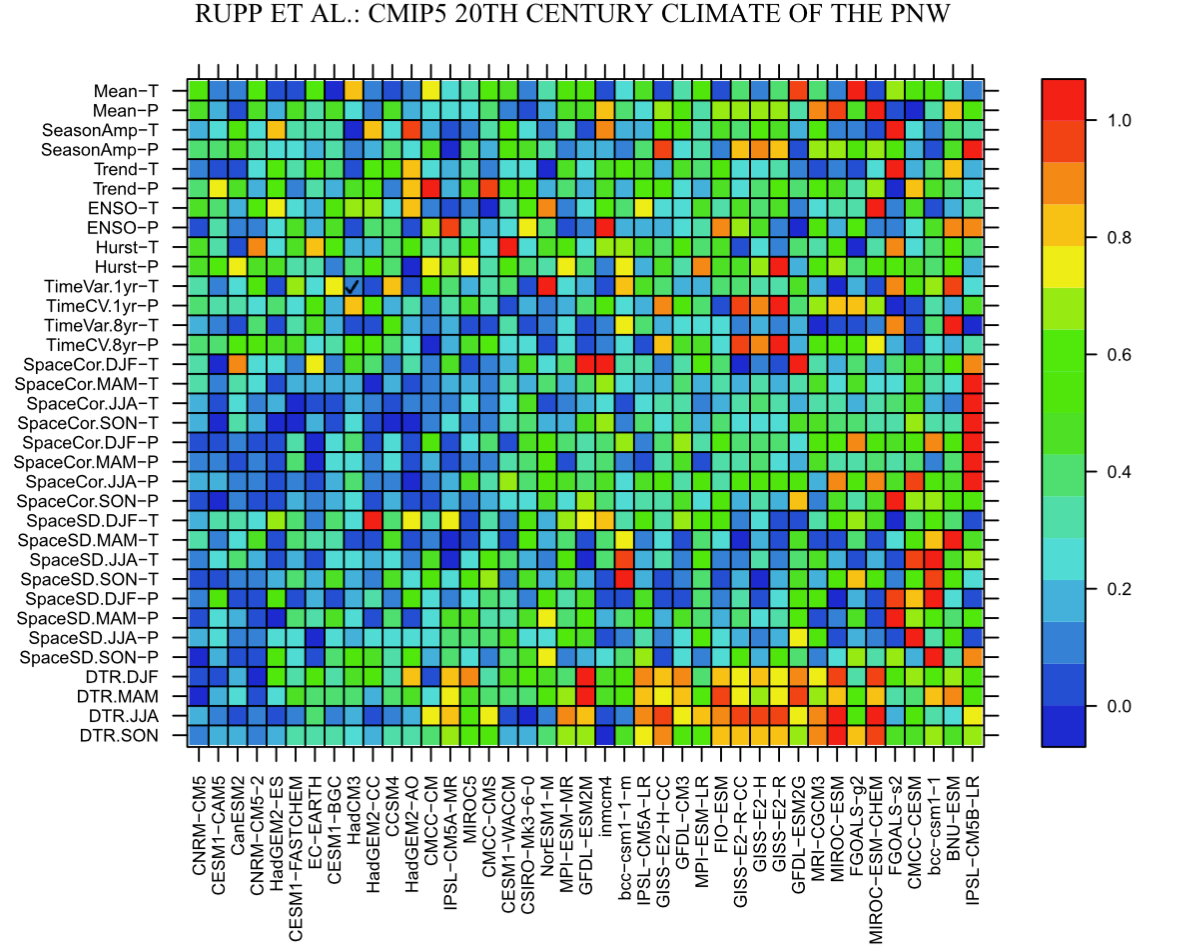

To that end, the WW2100 climate team assessed 41 GCMs from CMIP5 for their ability to simulate various aspects of climate in the Pacific Northwest. The team’s results were published in the Journal of Geophysical Research: Atmospheres (Rupp et al., 2013), where the researchers’ full results and methods can be reviewed. What follows is a summary of the methods employed and how they aided WW2100.

As the WW2100 team stated in their paper, their goal in evaluating the CMIP5 models was “to evaluate model performance in order to make informed recommendations to those who may use these model outputs” (Rupp et al., 2013). The researchers determined these “downstream” users, including resource managers and other scientists assessing climate impacts, would be best served by GCMs that gave the best statistical fit to the observed climate of the Pacific Northwest. (The team defined the Pacific Northwest as the area between longitudes 124.5° W and 110.5° W and between latitudes 41.5° N and 49.5° N, or roughly Oregon, Washington, Idaho, and western Montana.)

To find the best fit, the team evaluated the CMIP5 GCMs according to their ability to re-create in computer simulations the observed historical climate of the 20th century. This hindcasting ran from 1850 to 2005 and focused on temperature and precipitation. Observed climate data were taken from five gridded datasets of monthly means. A suite of statistics, or metrics, was calculated from both the hindcasts and the observations, and the two sets were then compared. These metrics included mean seasonal values, interannual variability, amplitude of the seasonal cycle, consistency in spatial patterns, and sensitivity to the El Niño Southern Oscillation, among others. The researchers used two methods for ranking the performance of the GCMs based on these metrics. The first method assigned equal weight to each of the metrics. The second method excluded those metrics that were not considered robust. This involved ranking the metrics under the assumption that certain metrics might be more important in the assessment process. It also included an attempt to avoid redundancy, given that not all metrics are independent of one another.
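As a rough illustration of the first, equal-weight ranking method, the sketch below rescales each metric's error to the range 0 to 1 across models and sums the rescaled errors with equal weight. The min-max rescaling is an assumption made here for illustration, and the model names, metric names, and error values are hypothetical; see Rupp et al. (2013) for the procedure the team actually used.

```python
def rank_models(errors):
    """Rank models by the equal-weight sum of rescaled metric errors.

    errors maps model name -> {metric name: error magnitude}.
    Each metric is rescaled across models so the best model scores 0
    and the worst scores 1 (an assumed normalization, for illustration).
    Returns a list of (model, total score), best (lowest) first.
    """
    models = list(errors)
    metrics = list(next(iter(errors.values())))
    totals = {m: 0.0 for m in models}
    for metric in metrics:
        values = [errors[m][metric] for m in models]
        lo, hi = min(values), max(values)
        span = hi - lo
        for m in models:
            # If every model has the same error, the metric cannot
            # discriminate between models, so it contributes nothing.
            totals[m] += (errors[m][metric] - lo) / span if span > 0 else 0.0
    return sorted(totals.items(), key=lambda item: item[1])


# Hypothetical errors for three made-up models and two metrics.
example_errors = {
    "model_A": {"seasonal_mean_T": 0.5, "interannual_var_P": 2.0},
    "model_B": {"seasonal_mean_T": 1.5, "interannual_var_P": 1.0},
    "model_C": {"seasonal_mean_T": 2.5, "interannual_var_P": 4.0},
}
ranking = rank_models(example_errors)
```

Rescaling before summing keeps a metric with large raw units from dominating the total, which is the point of giving each metric equal weight.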

The result was a ranking of the models according to metrics that led to a subset of GCMs that the team’s methods determined were the best statistical fit for the climate of the Pacific Northwest.

Figure 1. A depiction of the climate model assessment work done for WW2100. Models are listed at the bottom. On the left are meteorological measures, including temperature and precipitation. The graph depicts a relative error, in this case how well the models compare relative to each other when matched against actual historical measures for the Northwest. Here warm colors depict higher degrees of error and cooler colors less error. The models are organized from left (least error) to right (most error). (Image Source: Rupp et al., 2013)

Emissions Scenarios Selection

The biggest certainty in climate science is that increasing greenhouse gas (GHG) concentrations, especially carbon dioxide, are heating the Earth’s atmosphere. Precisely how much regional climate temperatures will increase in response to a given rise in GHGs is not known, which is why climate researchers examine more than one GCM. However, the biggest uncertainty about future climate by the end of the 21st century stems from not knowing just how much GHG human industry will continue to emit.

With their chosen models, the WW2100 climate team used GCM output (available from the CMIP5 project) for two emission scenarios to incorporate a range of GHG concentration uncertainty. These emission scenarios are known as Representative Concentration Pathways, or RCPs, a category created by researchers convened to support the work of the United Nations’ Intergovernmental Panel on Climate Change (IPCC).

RCPs are the new standard for modeling emission uncertainty. There are four RCPs representing different concentrations of greenhouse gases, or different possible futures based on how much GHG human industry might emit. These scenarios are: RCP 8.5, RCP 6, RCP 4.5, and RCP 2.6. Here, higher numbers represent a greater degree of radiative forcing (in W/m² over preindustrial levels; e.g., 8.5 = 8.5 W/m²) that the scenarios are expected to produce by 2100 (RCP 8.5 is bigger than RCP 6 and so on) and, hence, a future scenario with more emissions.

For WW2100, we employed two RCPs: RCP 8.5 (the high emissions scenario that assumes human industry will continue to emit greenhouse gases at a growing rate) and RCP 4.5 (a middle-of-the-road scenario in which emissions will be curbed starting in the middle of this century).

Selecting Representative Climate Scenarios Using a Sensitivity Approach

Resource managers and other downstream users of GCM data often lack the ability to process data from multiple climate models and scenarios, which requires considerable computing resources. This is especially true when their impacts work also considers other types of future scenarios, such as economics, demographics, and land uses, which require still more computing resources. What is often done instead is to select a subset of representative models and scenarios. For WW2100, we selected a subset of three “representative” scenarios, that is, scenarios that are representative of GCMs run with the RCPs, the data from which could then be fed into WW2100’s later modeling.

To find their subset of scenarios, the team conducted a sensitivity analysis in the Willamette River Basin that allowed them to select three representative GCMs from the 33 CMIP5 climate models for which future climate scenarios were available (from the 41 GCMs evaluated in the first step).

The sensitivity analysis used simple perturbation experiments to estimate how changes in temperature and precipitation affect summertime streamflow in the Willamette River Basin. More specifically, using the Variable Infiltration Capacity (VIC) Macroscale Hydrologic Model, we simulated streamflow using historical weather data from 1975-2004. Then, we ran a perturbation: Using the same setup, we ran the hydrologic model again, but with incremental increases in temperature. The percentage by which summertime streamflow changes in these perturbation experiments provides an estimate of how sensitive streamflow will be in a warmer climate. The same type of perturbation experiment was then repeated, but this time for incremental precipitation increases and decreases.
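The arithmetic behind such a perturbation experiment can be sketched as follows. This is a minimal illustration, not the team's code: the function names are hypothetical, the flow values are made up, and the hydrologic model itself is not run here. The inputs are assumed to be mean summertime flows taken from a baseline run and a perturbed run.

```python
def temperature_sensitivity(base_flow, perturbed_flow, delta_t_c):
    """Percent change in mean summer flow per deg C of imposed warming."""
    percent_change = 100.0 * (perturbed_flow - base_flow) / base_flow
    return percent_change / delta_t_c


def precipitation_elasticity(base_flow, perturbed_flow, delta_p_percent):
    """Percent change in mean summer flow per percent change in precipitation."""
    percent_change = 100.0 * (perturbed_flow - base_flow) / base_flow
    return percent_change / delta_p_percent


# Hypothetical mean summer flows (arbitrary units), for illustration only.
s_t = temperature_sensitivity(base_flow=100.0, perturbed_flow=85.0, delta_t_c=1.0)
eps_p = precipitation_elasticity(base_flow=100.0, perturbed_flow=112.0, delta_p_percent=10.0)
```

With these made-up numbers, a 1° C perturbation that lowers flow from 100 to 85 gives a sensitivity of -15 percent per degree, and a 10 percent precipitation increase that raises flow from 100 to 112 gives an elasticity of 1.2.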

We then used these derived sensitivities to draw contours of constant summertime streamflow change on a scatter plot of temperature and precipitation changes in GCM output. With the contours as guides, the team selected GCMs and accompanying RCPs with the objective of spanning a wide range of warming (high, middle, and low) while also spanning a wide range of hydrological impact. Where multiple models were available to choose from in each category (high, middle, low), the team chose one of the better performing GCMs according to the model ranking discussed above.
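One way to picture the contours is with a first-order estimate in which the temperature and precipitation effects simply add. The sketch below assumes that linear, additive form, which may be simpler than the method in Vano et al. (2015). The sensitivity values, GCM labels, and projected changes are all hypothetical.

```python
def estimated_flow_change(delta_t_c, delta_p_percent, s_t, eps_p):
    """First-order estimate of percent change in summer flow.

    s_t is percent flow change per deg C; eps_p is percent flow change
    per percent precipitation change. Assumes the two effects add.
    """
    return s_t * delta_t_c + eps_p * delta_p_percent


def contour_precip(delta_t_c, target_change_percent, s_t, eps_p):
    """Precipitation change (%) lying on the contour of constant flow change."""
    return (target_change_percent - s_t * delta_t_c) / eps_p


# Hypothetical sensitivities and GCM changes (deg C, percent precipitation).
S_T, EPS_P = -8.0, 1.2
projections = {
    "gcm_low": (1.0, 2.0),
    "gcm_mid": (2.5, 0.0),
    "gcm_high": (4.0, -5.0),
}
impacts = {
    name: estimated_flow_change(dt, dp, S_T, EPS_P)
    for name, (dt, dp) in projections.items()
}
# Order the candidates from least to most warming to see the span covered.
by_warming = sorted(projections, key=lambda name: projections[name][0])
# Point on the -10 percent contour at 2 deg C of warming.
dp_on_contour = contour_precip(2.0, -10.0, S_T, EPS_P)
```

Plotting `contour_precip` over a range of temperature changes for several target values would give straight contour lines under this linear assumption; GCMs falling near different contours represent different levels of hydrological impact.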

Figure 2. Representative scenarios selection plot for summertime streamflow change in the Willamette River Basin. Contours represent constant change in streamflow calculated from perturbation experiments. GCM precipitation and temperature changes are based on differences between 1970-1999 and projected changes for the period 2041-2070. Climate models are listed on the right. Models were run using the emissions scenarios RCP 4.5 (low scenario) and RCP 8.5 (high scenario), shown in blue and red respectively. (Image Source: Vano et al., 2015)

From this analysis, three scenarios, now a combination of GCMs that had been run with the two separate RCPs, were ultimately selected.

The final representative selections are: the High Climate Change scenario (the HadGEM2-ES climate model run with RCP 8.5); the Reference Case scenario (the MIROC5 climate model run with RCP 8.5); and the Low Climate Change scenario (the GFDL-ESM2M climate model run with RCP 4.5). Note: GFDL-ESM2M was not ranked highly by performance, but it was selected nonetheless to represent the Low Climate Change scenario, as none of the lowest-warming models were ranked highly. Hereafter the scenarios will be referred to as HighClim, Reference, and LowClim.

The LowClim scenario represents a small temperature increase and small decrease in summertime streamflow (lowest impact). The HighClim scenario represents a large temperature increase and large decrease in summertime streamflows (highest projected impacts). The Reference scenario lies between the two extremes.

It’s worth noting that an additional constraint was placed on the selection process: that all requisite data for a given GCM was available for the downscaling procedure (see section on downscaling below). This constraint ultimately limited the team to choosing from among 20 GCMs.

Downscaling

In order to feed data from the HighClim, Reference, and LowClim scenarios into WW2100’s later modeling work, the team performed a process called downscaling. Downscaling is a means to convert or translate the coarse resolution of GCM grids (which are as large as 375 km, roughly 233 miles, to a side) down to a finer resolution, which for WW2100 was about 4 km, roughly 2.5 miles. This is done to account for the details of local topography and local climate. This adjustment is needed in the Pacific Northwest, which has a complex mountainous topography that does not appear in a detailed form in the GCMs.

For WW2100, we downscaled data from the HighClim, Reference, and LowClim scenarios for the Willamette Valley. The method used was the Multivariate Adaptive Constructed Analogs (MACA), a downscaling method developed by University of Idaho (UI) researcher John Abatzoglou. Resulting data from the downscaling were then fed into the Envision model’s component models.